Introduction

There is a number that data teams love to present in boardrooms. It usually sits somewhere between 88% and 96%, and it is always followed by a confident pause. That number is model accuracy. And in most organizations, it has become the primary proof that an AI project is working.

It is also one of the most misleading metrics in enterprise technology.

Not because accuracy is unimportant. It is. But because accuracy measures what the model does in isolation. It says nothing about what the organization does as a result. And in the gap between those two things, billions of dollars of AI investment quietly disappears every year.

The real question is not whether your model is right. The real question is whether your decisions got better.

The POC Trap: When Success Stops at the Slide Deck

Most AI projects have a moment of genuine triumph. The proof of concept clears the threshold. The model performs well on holdout data. Leadership nods approvingly. Someone makes a slide that says 94% accuracy in a large font.

Then the project goes to production.

And nothing changes.

Not the workflow. Not the incentives. Not the conversation between an underwriter and their manager when a borderline risk lands on their desk. The model sits in the system, quietly being accurate, and the humans around it continue deciding exactly as they always have.

This is not a technology failure. It is a system design failure. The model was built. The decision environment was not redesigned around it. Those are two completely different problems, and most AI project plans only solve the first one.

The POC proved the model works. It never proved the organization would change.

Accuracy vs. Action Rate: The Metric That Actually Matters

Here is a thought experiment worth sitting with.

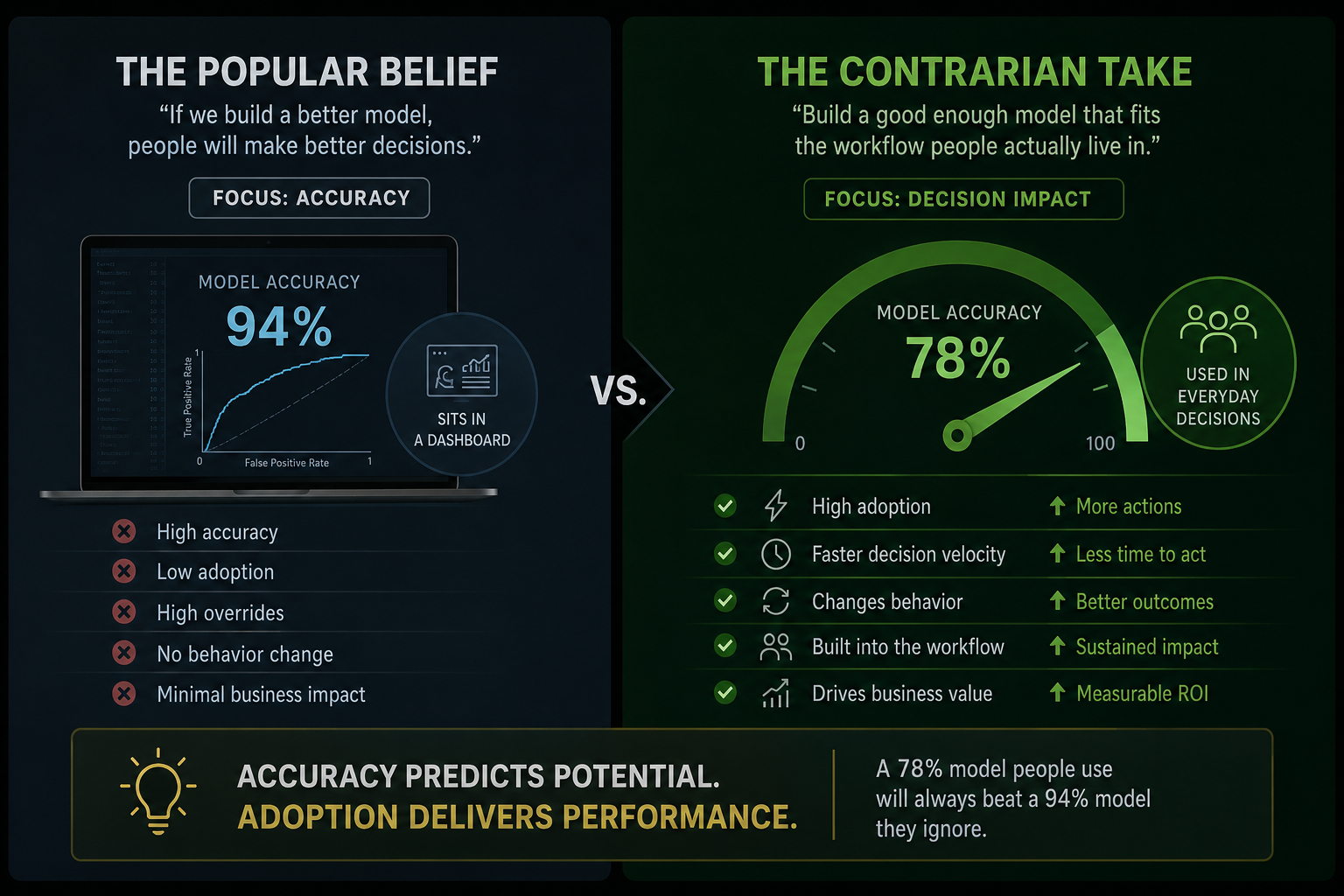

You have two models. Model A is 94% accurate. It lives in a dashboard that your team checks three times a week, sometimes. Model B is 78% accurate. It is embedded directly into the claims workflow, surfaces a recommendation at the exact moment a decision is being made, and the team has been trained to act on it within 24 hours.

Which one improves your business outcomes?

Model B. Every time. By a significant margin.

This is the core insight that most AI strategies miss. Accuracy is a model metric. Decision velocity and action rate are business metrics. Decision velocity measures how fast a prediction becomes an actual decision. Action rate measures how often the system changes what someone does versus what they would have done anyway.

A high-accuracy model with a low action rate is not an AI system. It is an expensive report.

If you want to measure whether your AI is actually working, stop looking at the confusion matrix. Start asking: how many decisions changed this week because of this model, and how fast did that happen?

The Insurance Problem: Why Underwriters Override and What It Reveals

Insurance is one of the most data-rich industries on the planet. It is also one of the most instructive examples of the accuracy-action gap in practice.

Underwriters in many markets override AI recommendations at rates that would surprise most data teams. Not occasionally. Consistently. And the instinct in most organizations is to treat this as a trust problem, a change management problem, or in some cases, a people problem.

It is usually none of those things.

It is a system design problem.

When AI recommendations arrive without context, without an explanation of what drove the score, without a feedback loop that shows the underwriter what happened the last time they overrode a similar recommendation, the rational response is skepticism. The model may be right. But the system gave the human no reason to believe it, no way to verify it, and no consequence for ignoring it.

Trust in AI is not built through accuracy alone. It is built through transparency, feedback, and workflow integration. An underwriter who can see why the model flagged a risk, who receives a monthly summary of their override outcomes, and whose workflow makes acting on the recommendation the path of least resistance, will use the system differently than one who sees a score and nothing else.

The override rate is not a measure of resistance. It is a measure of how well the system was designed to be trusted.

What to Fix: The Decision System Audit

If your AI project is live but your decisions have not changed, here is where to look before you retrain the model.

First, map the decision moment. Where exactly does a human make the call that your model is trying to inform? Is the recommendation present at that moment, in that interface, in a form that is immediately usable? If the answer is no, the model is not in the decision system. It is adjacent to it.

Second, measure action rate by user. Not overall. By person. You will almost always find that a small number of people are using the model consistently and getting value. Understanding what they do differently is worth more than any hyperparameter tuning.

Third, build a feedback loop that is visible to the user. If someone overrides the model, what happened? Did the claim go well? Did the risk materialize? People change behavior when they can see the consequence of their choices. A model that gives a score and then goes silent is a model that will be ignored.

Fourth, check the incentive structure. If an underwriter is rewarded for speed and volume, and using the AI recommendation requires additional steps or documentation, they will skip it. Not because they distrust AI. Because the system is not aligned with how they are measured.

Your model accuracy is probably fine. Your decision system is probably where the value is being lost.

Conclusion: The Finish Line Was Never the POC

The most important shift in AI strategy right now is not about better models. It is about better decision systems.

A decision system is not just a model. It is the model plus the workflow it lives in, the people who interact with it, the feedback loops that make it trustworthy over time, and the incentives that make acting on it the natural choice.

When you design for accuracy, you build a model. When you design for action, you build a system.

The organizations that will get durable value from AI are not the ones with the highest model performance. They are the ones that asked a harder question from the beginning: not whether the model is right, but whether anything will change because of it.

That is the question worth obsessing over.

Decision rule to carry forward: Before signing off on any AI project, ask three things. Where exactly does the recommendation appear in the workflow? What is the expected action rate in month one? And what feedback does the user receive after they act or override? If you cannot answer all three, the project is not ready for production.