Introduction: Not a Competition, a Comparison

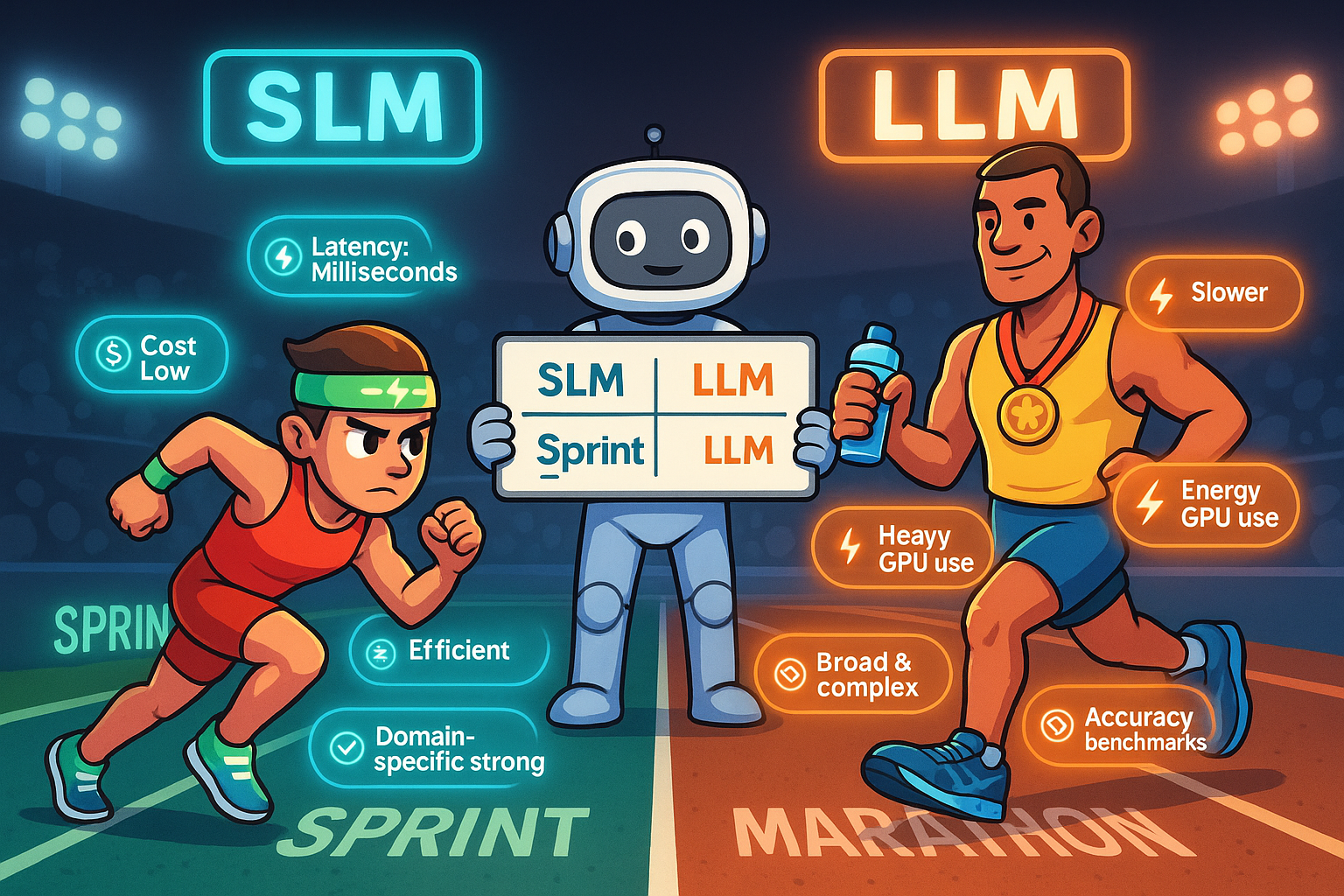

Framing Small Language Models (SLMs) and Large Language Models (LLMs) as competitors misses the point. They are different tools designed for different conditions, and each is genuinely superior in the contexts where it fits best.

Where LLMs Win

Large Language Models have genuine, durable advantages in specific dimensions. Breadth of knowledge is the most significant: an LLM trained on a vast corpus of human-generated text can engage meaningfully with almost any topic and synthesise across domains.

Complex multi-step reasoning is another LLM strength. Tasks that require holding many pieces of information in working memory simultaneously benefit from the capacity that larger models provide.

Generative versatility is where LLMs genuinely shine: a single model can write code, compose prose, generate creative content, and adapt to widely different output requirements.

Where SLMs Win

Latency is the most immediately measurable SLM advantage. An SLM deployed on-device or at the edge can begin responding within milliseconds because no network hop is involved, whereas every request to a cloud-hosted LLM incurs a network round trip that can add hundreds of milliseconds before generation even begins.

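Cost is the advantage that matters most over time. Inference compute per token grows roughly in proportion to a model's parameter count, so at production scale, where request volumes are high, LLM inference becomes expensive while SLM inference remains dramatically cheaper.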
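To make the scaling argument concrete, here is a back-of-the-envelope sketch. It assumes both models are billed per token, which is a simplification (a self-hosted SLM is really paid for in hardware and energy), and every price and workload figure in it is a hypothetical placeholder rather than a quote from any real provider.

```python
# Back-of-the-envelope inference cost comparison.
# ASSUMPTION: both models are billed per token. All prices and volumes
# below are hypothetical placeholders, not real provider pricing.

def monthly_inference_cost(requests_per_day: int,
                           tokens_per_request: int,
                           price_per_million_tokens: float,
                           days: int = 30) -> float:
    """Cost = total tokens processed over `days` days * price per token."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * price_per_million_tokens


# Hypothetical workload: 100,000 requests per day, ~1,000 tokens each.
REQUESTS_PER_DAY = 100_000
TOKENS_PER_REQUEST = 1_000

# Hypothetical prices in USD per million tokens.
LLM_PRICE = 10.00
SLM_PRICE = 0.20

llm_cost = monthly_inference_cost(REQUESTS_PER_DAY, TOKENS_PER_REQUEST, LLM_PRICE)
slm_cost = monthly_inference_cost(REQUESTS_PER_DAY, TOKENS_PER_REQUEST, SLM_PRICE)

print(f"LLM: ${llm_cost:,.2f}/month")
print(f"SLM: ${slm_cost:,.2f}/month")
print(f"Ratio: {llm_cost / slm_cost:.0f}x")
```

With these placeholder numbers the workload processes three billion tokens a month, so the LLM bill comes to $30,000 against $600 for the SLM. The point is not the specific figures but that the gap is multiplied by every request, which is why it dominates over time.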

Domain-specific accuracy after fine-tuning is perhaps the most counter-intuitive SLM advantage. A small model fine-tuned on highly relevant domain data can match or even outperform a much larger general-purpose model on those specific tasks.

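Privacy and control round out the SLM advantages where data governance requirements apply: because an SLM is small enough to run on-premises or on-device, sensitive data never has to leave infrastructure the organisation controls.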

The Complementarity Insight

The most strategically sophisticated organisations are not asking whether to use SLMs or LLMs. They are building systems that use both, directing each type of model to the tasks where its advantages are decisive.
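One way to picture such a hybrid system is a simple rule-based router that sends each task to whichever model type holds the decisive advantage. The sketch below is illustrative only: the task labels, the `SLM_SPECIALISED_KINDS` set, and the 200 ms latency threshold are hypothetical, and a production router could instead use a learned classifier or model confidence scores.

```python
from dataclasses import dataclass
from enum import Enum


class Model(Enum):
    SLM = "slm"  # small, fine-tuned, cheap, low latency, can run locally
    LLM = "llm"  # large, general-purpose, broad knowledge


@dataclass
class Task:
    kind: str                              # hypothetical task label
    contains_sensitive_data: bool = False
    latency_budget_ms: int = 1_000


# Hypothetical narrow tasks an organisation has fine-tuned an SLM for.
SLM_SPECIALISED_KINDS = {"classify", "extract", "route_ticket"}

# Hypothetical cutoff below which a cloud round trip is unaffordable.
TIGHT_LATENCY_MS = 200


def choose_model(task: Task) -> Model:
    """Route a task to the model type whose advantages are decisive for it."""
    # Data governance: keep sensitive data on local infrastructure.
    if task.contains_sensitive_data:
        return Model.SLM
    # Tight latency budget: avoid the network hop to a cloud-hosted LLM.
    if task.latency_budget_ms < TIGHT_LATENCY_MS:
        return Model.SLM
    # Narrow, well-defined tasks the SLM was fine-tuned for.
    if task.kind in SLM_SPECIALISED_KINDS:
        return Model.SLM
    # Everything else (open-ended generation, multi-step reasoning,
    # cross-domain synthesis) goes to the LLM.
    return Model.LLM


if __name__ == "__main__":
    print(choose_model(Task(kind="classify")).value)
    print(choose_model(Task(kind="write_report")).value)
    print(choose_model(Task(kind="write_report", latency_budget_ms=50)).value)
```

The rules are checked in order, so hard constraints (privacy, latency) override task type. That is a deliberate design choice in this sketch: a sensitive open-ended task goes to the SLM even though an LLM might answer it better.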

Conclusion

The right model is not the most impressive model. It is the most appropriate model for the specific task, deployment environment, and economic constraints at hand. LLMs win the marathon. SLMs crush the sprint.